X just open-sourced its algorithm again. This time it's one model.

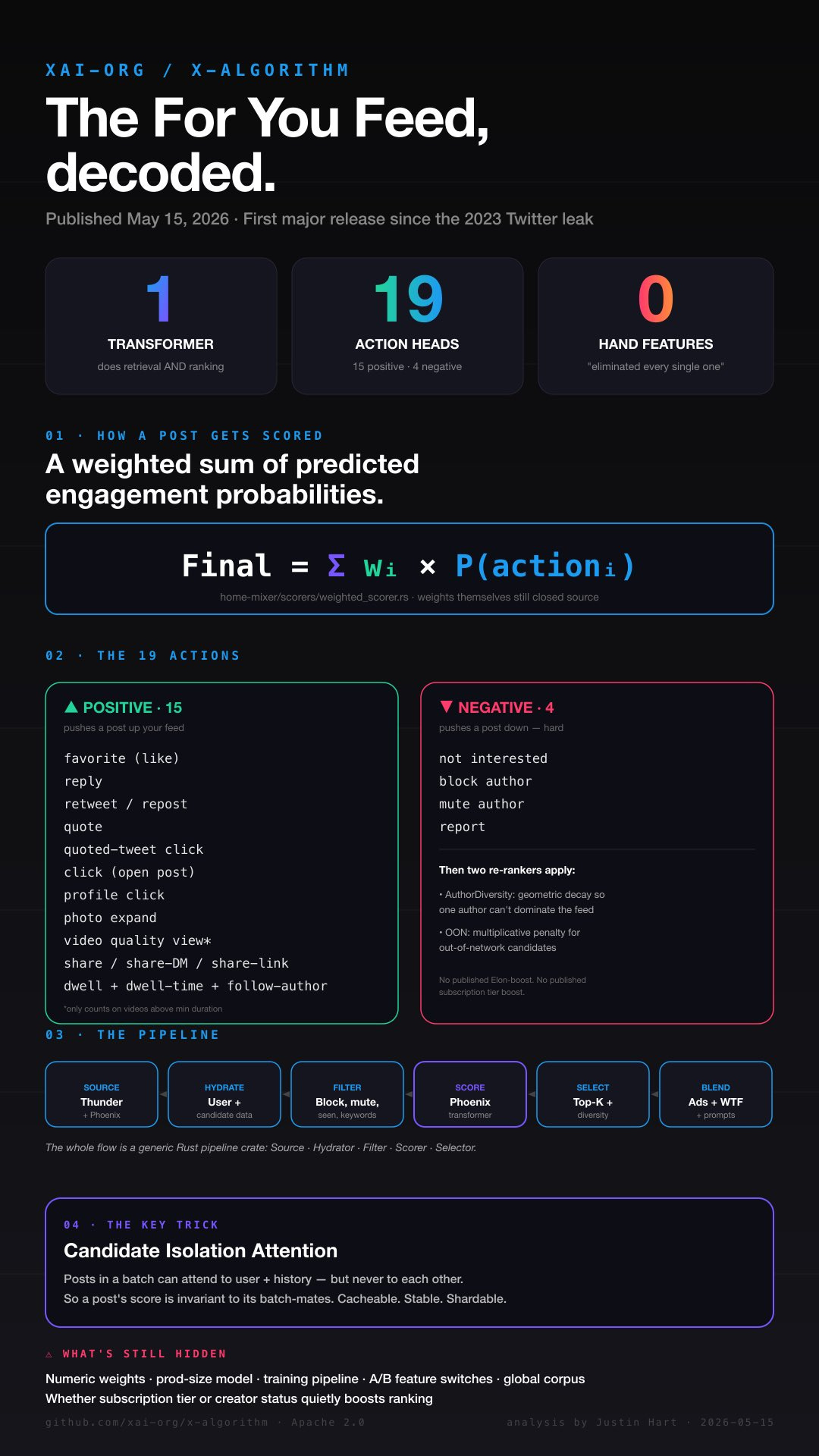

A code-level walkthrough of xAI's May 15 release of xai-org/x-algorithm, and what it means if you're trying to be seen on the platform.

When Twitter open-sourced “the algorithm” in March 2023, it was a sprawl. Scala services everywhere. Heavy Ranker. SimClusters. TwHIN. RealGraph. Earlybird. Hundreds of hand-engineered features. A leaked file showing that a “reported” post got a weight of roughly -369 while a reply with author engagement got +75.

It was a system that read like a city — built in layers, by different teams, over a decade.

This morning xAI published xai-org/x-algorithm, the For You feed’s source code as it runs today. It does not read like a city. It reads like one transformer model and a handful of pipes around it.

The README puts it bluntly:

We have eliminated every single hand-engineered feature and most heuristics from the system.

That is the headline. Everything else in this post is what falls out of it.

The new shape: one model knows you, one model scores everything

The new system has a name — Phoenix — and it does two jobs.

First, retrieval: given who you are, find a few thousand candidate posts out of the global stream that might be worth showing you. Phoenix does this with a two-tower transformer: one tower turns you into an embedding, the other turns every post into an embedding, and a dot product picks the closest matches. No more SimClusters communities, no more RealGraph predicting who you’d engage with. Just embeddings.
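In miniature, the retrieval step looks like this. The sketch assumes toy, hand-written vectors standing in for the two towers' outputs. `retrieve_top_k` is a hypothetical name, and the exact brute-force top-k shown here is for illustration only, not a claim about how Phoenix searches its index.

```python
import heapq


def dot(a, b):
    """Dot product of two equal-length embedding vectors."""
    return sum(x * y for x, y in zip(a, b))


def retrieve_top_k(user_vec, post_vecs, k):
    """Return the ids of the k posts whose embeddings score highest against the user embedding."""
    scored = ((dot(user_vec, vec), post_id) for post_id, vec in post_vecs.items())
    return [post_id for _, post_id in heapq.nlargest(k, scored)]


# Hypothetical tower outputs. In Phoenix these are learned transformer embeddings
# (128-dim in the released mini model); here they are hand-written 3-dim toys.
user_vec = [0.9, 0.1, 0.0]
post_vecs = {
    "sports_highlight": [0.8, 0.2, 0.0],
    "cooking_video": [0.1, 0.9, 0.1],
    "election_thread": [0.0, 0.1, 0.9],
}

print(retrieve_top_k(user_vec, post_vecs, k=2))  # ['sports_highlight', 'cooking_video']
```

Scoring every post exactly is only practical for a toy. Against a global post stream, this lookup would normally go through an approximate nearest-neighbor index, which the sketch leaves out.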

Second, ranking: given those candidates, predict how likely you are to do each of 19 different things to each one. Then weight those predictions, add them up into a single score, and sort.

That’s it. That’s the system.

The supporting cast is small:

Thunder holds posts from accounts you follow, in memory, with sub-millisecond reads.

Grox is a separate worker pool that classifies posts as they’re created — is this spam, does it look viral, is it adult content, what does the video say, what does the image show.

Home Mixer is the orchestrator in Rust that glues retrieval, ranking, filtering, ad placement, and “Who to Follow” injection into one gRPC service.

Compared to 2023’s architecture diagram, which looked like a subway map, this one is a straight line.
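Here is that straight line as a toy. It shows the flow Home Mixer orchestrates: in-network candidates come from a Thunder-like in-memory store and out-of-network candidates come from retrieval, then everything is filtered, scored, and sorted. This is a sketch under heavy assumptions, not the service itself. The real Home Mixer is a Rust gRPC service. Every name here (`Post`, `thunder_store`, `is_visible`, `build_feed`) is hypothetical. Ad placement and Who to Follow injection are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    post_id: str
    author: str
    paywalled: bool = False


# Hypothetical Thunder-like in-memory store: author -> that author's recent posts.
thunder_store = {
    "alice": [Post("a1", "alice"), Post("a2", "alice", paywalled=True)],
    "bob": [Post("b1", "bob")],
}


def in_network(following):
    """In-network candidates: recent posts from followed accounts, read straight from memory."""
    return [post for author in following for post in thunder_store.get(author, [])]


def is_visible(post, subscriptions):
    """Filter: hide paywalled posts unless the viewer subscribes to their author."""
    return not post.paywalled or post.author in subscriptions


def build_feed(following, out_of_network, subscriptions, score, size):
    """Merge candidate sources, filter, rank by score, and keep the top `size` post ids."""
    candidates = in_network(following) + out_of_network
    visible = [post for post in candidates if is_visible(post, subscriptions)]
    ranked = sorted(visible, key=score, reverse=True)
    return [post.post_id for post in ranked[:size]]


# Stand-ins: out-of-network posts would come from Phoenix retrieval,
# and these scores from Phoenix ranking.
out_of_network = [Post("x1", "carol"), Post("x2", "dave")]
toy_scores = {"a1": 0.4, "a2": 0.9, "b1": 0.2, "x1": 0.7, "x2": 0.1}


def score(post):
    return toy_scores[post.post_id]


print(build_feed(["alice", "bob"], out_of_network, set(), score, size=3))      # ['x1', 'a1', 'b1']
print(build_feed(["alice", "bob"], out_of_network, {"alice"}, score, size=3))  # ['a2', 'x1', 'a1']
```

The paywall check works as a filter, not a boost. A subscriber sees the highest-scoring post and a non-subscriber never does, yet the score itself never changes.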

What the model actually scores you on

The final score that determines whether a post lands in your feed is, mathematically, dead simple:

Final score = Σ (weight × predicted probability)

The 19 things the model predicts for each candidate post:

Positive signals (15) — these push a post toward you: favorite, reply, retweet, quote, quoted-tweet click, post click, profile click, photo expand, video quality view, share, share-via-DM, share-via-copy-link, dwell, dwell time (continuous), follow author.

Negative signals (4) — these shove a post away from you, hard: not interested, block author, mute author, report.
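The formula above is a plain weighted sum, so it fits in a few lines. This is a minimal sketch over a subset of the 19 heads, with one important caveat: every weight below is an invented placeholder, because the real constants weren't released. Only the signs follow the article. Positive heads add to the score, and the four negative heads subtract from it. The snake_case head names, `ACTION_WEIGHTS`, and `final_score` are hypothetical.

```python
# PLACEHOLDER weights: the real magnitudes live in a params module that was not released.
# Only the signs reflect the article: positive heads > 0, negative heads < 0.
ACTION_WEIGHTS = {
    "favorite": 1.0,
    "reply": 10.0,
    "retweet": 2.0,
    "share_via_dm": 5.0,
    "dwell": 3.0,
    "not_interested": -20.0,
    "block_author": -50.0,
    "mute_author": -40.0,
    "report": -100.0,
}


def final_score(predicted):
    """Sum of weight * predicted probability; heads missing from `predicted` contribute nothing."""
    return sum(ACTION_WEIGHTS[action] * p for action, p in predicted.items())


def rank(candidates):
    """Sort post ids by final score, highest first."""
    return sorted(candidates, key=lambda pid: final_score(candidates[pid]), reverse=True)


# Hypothetical per-head probabilities for two candidate posts.
candidates = {
    "calm_thread": {"favorite": 0.10, "reply": 0.02, "dwell": 0.40, "report": 0.001},
    "rage_bait": {"favorite": 0.20, "reply": 0.08, "block_author": 0.03, "report": 0.01},
}

print({pid: round(final_score(p), 3) for pid, p in candidates.items()})  # {'calm_thread': 1.4, 'rage_bait': -1.5}
print(rank(candidates))  # ['calm_thread', 'rage_bait']
```

The magnitudes are made up, but the mechanism is the point. With large negative weights, a few percent chance of a block or report is enough to sink a post that is otherwise more likeable.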

A few things worth lingering on:

Share is broken into three. “Generic share button” is separate from “share to a DM” is separate from “copy-link share”. You can imagine the weights moving independently — share-via-DM signals genuine “send this to my friend” energy, copy-link signals “I’m pasting this somewhere”, and the share button signals neither. Different weights mean xAI can boost one without boosting the others.

Video views only count for long-enough videos. If you slap a two-second video on a text post to chase the video-view signal, the code explicitly drops the video-quality-view (VQV) weight to zero unless the video's duration crosses a minimum threshold. That's a deliberate defense against gaming the video score with stub clips.
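The duration gate amounts to a conditional weight. In this minimal sketch, `MIN_VQV_DURATION_MS` and `VQV_WEIGHT` are hypothetical placeholders, since the real threshold and weight aren't given. Treating a duration exactly at the threshold as passing is also an assumption.

```python
# Hypothetical values: the release's actual threshold and weight are not stated in this post.
MIN_VQV_DURATION_MS = 10_000
VQV_WEIGHT = 2.0


def vqv_weight(video_duration_ms):
    """VQV weight, or 0.0 when there is no video or it is shorter than the minimum duration."""
    if video_duration_ms is None or video_duration_ms < MIN_VQV_DURATION_MS:
        return 0.0
    return VQV_WEIGHT


def vqv_contribution(p_vqv, video_duration_ms):
    """This head's term in the final score: gated weight times predicted VQV probability."""
    return vqv_weight(video_duration_ms) * p_vqv


print(vqv_contribution(0.9, 2_000))   # stub clip: 0.0, however confident the model is
print(vqv_contribution(0.3, 45_000))  # long enough: 2.0 * 0.3
```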

Dwell is measured twice. Once as “did you dwell” (a yes/no probability) and once as “how long did you dwell” (a continuous regression on milliseconds). The model is trying to learn both shapes of attention.
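A common way to train a yes/no head and a continuous head on the same signal is to give each head its own loss: log loss for "did you dwell" and squared error for dwell time. This sketch shows that general multi-task idea, not Phoenix's training code. Regressing on log milliseconds, the 2-second dwell threshold, and all function names are assumptions.

```python
import math


def binary_log_loss(p, label):
    """Log loss for the yes/no 'did you dwell' head; p is the predicted probability."""
    eps = 1e-7
    p = min(max(p, eps), 1.0 - eps)  # keep log() finite
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))


def dwell_time_loss(pred_log_ms, actual_ms):
    """Squared error for the continuous head, measured on log1p(milliseconds) (an assumed scale)."""
    return (pred_log_ms - math.log1p(actual_ms)) ** 2


def dwell_losses(p_dwell, pred_log_ms, actual_ms, dwell_threshold_ms=2_000):
    """Both dwell losses for one impression. The yes/no label uses a hypothetical threshold."""
    label = 1 if actual_ms >= dwell_threshold_ms else 0
    return binary_log_loss(p_dwell, label), dwell_time_loss(pred_log_ms, actual_ms)


# A viewer who stayed 5 seconds: the binary loss is small because the head predicted 0.8
# for a real dwell; the regression loss grows with the gap between 8.0 and log1p(5000).
print(dwell_losses(p_dwell=0.8, pred_log_ms=8.0, actual_ms=5_000))
```

Splitting the signal this way lets one head learn whether a post stops the scroll while the other learns how long the post holds attention.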

The numeric weights are not public. Same as 2023: the constants FAVORITE_WEIGHT, REPORT_WEIGHT, etc. live in a params module that didn’t ship with the open-source release. We can read the formula. We can’t read the magnitudes.

What the model no longer scores you on

This is where it gets interesting for anyone who’s been gaming X for distribution.

There’s no hand-coded recency boost anymore. Post age is fed to the model as a learned embedding (60-minute buckets, up to about 80 hours). Whether and how to penalize age is something Phoenix figures out from your behavior. If you generally engage with older posts, Phoenix learns that. If you only ever like things less than an hour old, it learns that too.
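Here is a sketch of the age feature. It assumes 80 one-hour buckets, with anything older clamped into the last one. The article only says "up to about 80 hours", so the exact count and the overflow rule are guesses. The seeded random table stands in for embeddings the real model learns, and `age_bucket` and `age_embedding` are hypothetical names.

```python
import random

BUCKET_MINUTES = 60  # from the article: 60-minute buckets
NUM_BUCKETS = 80     # "up to about 80 hours"; the exact count is an assumption
EMBED_DIM = 4        # toy width for readability


def age_bucket(age_minutes):
    """Map a post's age in minutes to a bucket index, clamping very old posts into the last bucket."""
    bucket = int(age_minutes) // BUCKET_MINUTES
    return min(max(bucket, 0), NUM_BUCKETS - 1)


# In the real model this table is learned; seeded random values stand in for trained weights.
rng = random.Random(0)
age_table = [[rng.gauss(0.0, 0.02) for _ in range(EMBED_DIM)] for _ in range(NUM_BUCKETS)]


def age_embedding(age_minutes):
    """Look up the embedding vector the model would see for this post age."""
    return age_table[age_bucket(age_minutes)]


for minutes in (5, 59, 60, 600, 10_000):
    print(minutes, "->", age_bucket(minutes))  # 0, 0, 1, 10, 79
```

Nothing in this code says "newer is better." The rows for bucket 0 and bucket 40 are just vectors, so any recency preference has to be learned by the layers that consume them.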

There’s no SimClusters tribal lift. Posts don’t get boosted because they’re popular within a community you belong to. They get boosted because the transformer thinks you specifically will engage.

There’s no published creator-tier multiplier. Subscriptions and verification are used as filters (you can’t see paywalled content if you’re not subscribed), but they do not appear anywhere in the public scoring code as a boost. Whether Phoenix’s hash embeddings implicitly learn “this author is a paying customer” is something the released code can’t answer. But the explicit code that used to nudge specific creator categories upward in 2023 is not in this release.

There’s no published Elon-boost. In 2023, there was a literal special case in the telemetry that tagged whether the post’s author was Elon Musk. This release has none of that. Whether it’s hidden in the closed weights is anyone’s guess, but the open code is clean.

There’s nothing for hashtags. Nothing for keywords. Nothing for hour-of-day. Nothing for “engagement velocity in the first 5 minutes.” None of those engineered features survive. The transformer reads your engagement history and decides.

What this means if you’re posting on X for distribution

A few things I’d actually do differently knowing this:

1. Optimize for reply with author engagement. This was the highest-weighted action in the 2023 leak, and there’s no signal it’s been demoted. The new system has the same reply prediction head plus a follow-up that includes whether the author engages back. If your post sparks a reply and you reply to that reply, that’s the goldmine event. Comment on your own posts.

2. Stop chasing stub videos. The minimum-duration gate on VQV means a 2-second video attached to a text post buys you nothing on the video score. If you’re going to use video, make it long enough to count.

3. The “share to a DM” signal is now its own thing. Posts that get sent in DMs are likely weighted differently from posts that get the share button tapped. Write things people actually send to friends. That’s a stronger signal than a like-and-scroll.

4. Dwell time really, really matters. The model has two dwell heads. Long, layered posts that hold attention are more visible to Phoenix than short punchy ones that get a quick like-and-scroll. The image-or-thread-with-context format wins over the one-liner format here.

5. Negatives are nuclear. Block, mute, and report aren’t soft signals: they’re explicit negative-weight heads on the prediction model. Going by the 2023 weights, one block likely does more harm than ten likes do good. If you’re posting things that consistently trigger these on a subset of viewers, you’re not just losing those viewers; you’re poisoning your distribution for everyone.

6. The model is learning you, not the platform. This is the real headline for operators. There is no master “what works on X” anymore — there’s only “what works on X for the people Phoenix has decided are your audience.” Two creators with identical posts will get very different reach, because Phoenix is scoring against the engagement history of each individual viewer, not against a universal recipe.

The honest caveats

A few things to keep in mind before you treat any of this as gospel:

The numeric weights are still closed. Until we see them, any relative ordering of “what matters most” is informed guesswork.

The production model is bigger than the released one. The open-sourced Phoenix is a mini version — 128-dim embeddings, 4 layers. Production has more layers and wider embeddings. The architecture is the same; the capacity is not.

The model is trained continuously in production, then snapshotted for this release. The frozen open-source checkpoint will get stale. Don’t over-fit your strategy to its specific behavior.

Anything could be re-introduced quietly. Hand-engineered features could come back through the closed weights or through feature-switch experiments. “We eliminated all of them” is a statement about this code, not a long-term commitment.

The bigger picture

The interesting thing about this release isn’t really X. It’s that this is what recommendation systems are starting to look like across the industry — TikTok, Meta, YouTube. The same shape. One big multi-action transformer fed by a behavior sequence, learning end-to-end, replacing decades of hand-crafted features.

You’re not being scored by an algorithm anymore in the old sense. You’re being scored by a model that’s silently learned what you specifically tend to engage with, and the only consistent advice that survives is: make things that the kind of person who likes your stuff actually wants to send to their friend in DMs.

The rest is closed weights and continuous training.

P.S. — I walked through the whole codebase line by line. If you want the full breakdown — every scoring head, every filter, every hydrator, the exact attention-mask trick that makes the model cacheable, and a complete 2023-vs-2026 diff — I posted the technical version here: justinhart.biz/blogs/x-algorithm-2026. There’s also an infographic if you’d rather just look at one picture.

P.P.S. — The repo is at github.com/xai-org/x-algorithm, Apache 2.0, ~1.5 MB of source plus a 3 GB pre-trained mini model in Git LFS. You can actually run retrieval and ranking on your own machine with a few uv commands. The example user history is three sports likes. Swap it for yours and watch what Phoenix recommends.

May marks 4 years of me being blocked from Xitter.